What Is Quantization

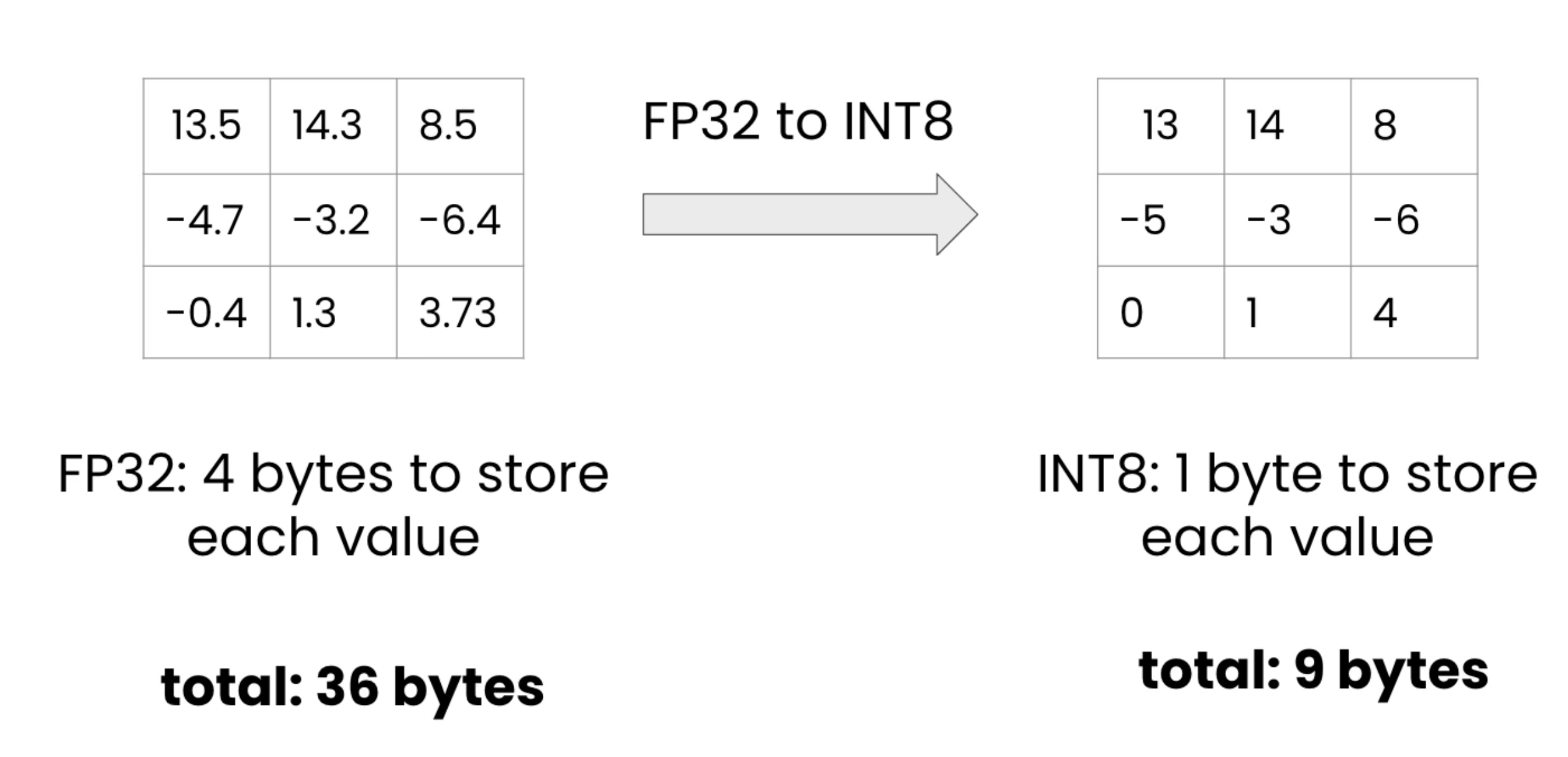

Quantization is used to lower the memory requirements to load and use a model by storing the model weights in lower precision while trying to preserve as much accuracy as possible.

Model weights are typically stored in full-precision (fp32) floating point representations, but half-precision (fp16 or bf16) representations are becoming popular given the huge size of models today.

Some quantization methods can further reduce the precision to integer representations, like int8 or int4. You can read more about data types for ML on my blogpost.

There is a table in documentation by Hugging Face on Quantization, which can help you pick a quantization method (available in Transformers) based on your hardware and what precision (number of bits) you want to quantize to.